Ask any senior engineer about the moment they truly understood distributed systems, or caching, or why you never trust client-side input — and they won’t describe reading a book. They’ll describe a night. A specific, awful night where something broke in production in a way they didn’t anticipate, and they had to sit with that brokenness, alone, until they’d battered their way through to the other side of it.

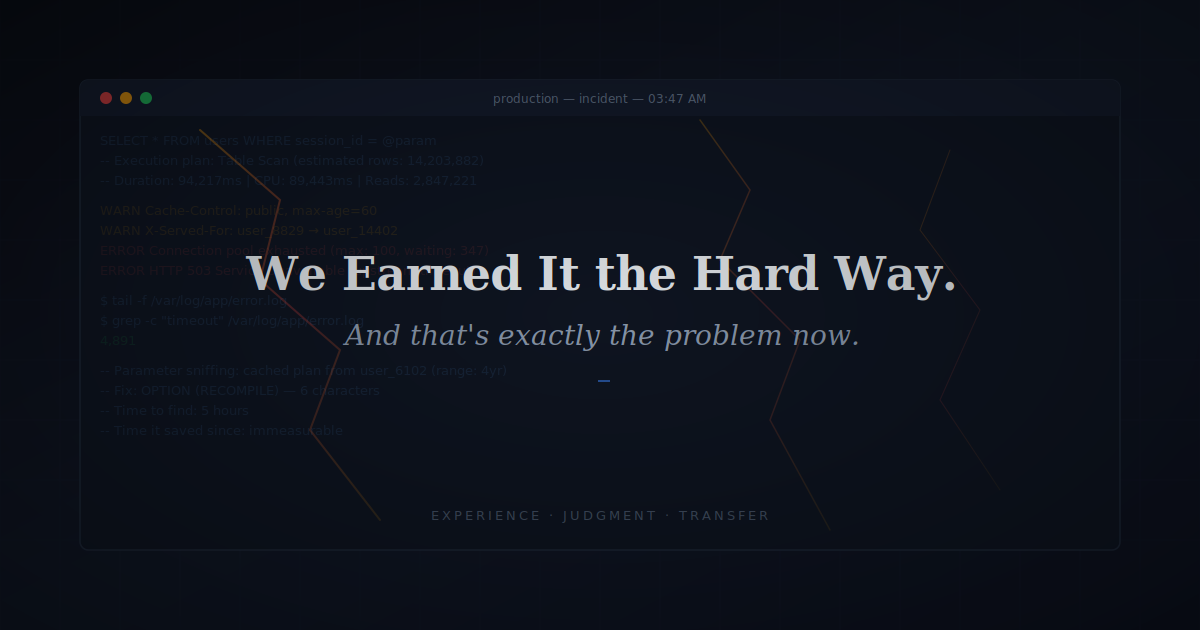

Every one of us has a story like that. A query that ran perfectly in development and brought production to its knees because the optimiser cached the wrong execution plan. A page caching layer that worked flawlessly until someone realised it was serving one user’s personal data to strangers. A deployment so innocuous it didn’t warrant a second look — until the alerts started firing. The details vary. The shape is always the same: something you didn’t know you didn’t know made itself violently clear, and the scar it left changed how you worked forever after.

That is how most of us learned. Not efficiently. Not cleanly. But deeply. The struggle wasn’t a flaw in the engineering education process. It was the education process. Every compiler error agonised over, every stack trace misread and re-read, every poorly designed system maintained long enough to understand why it was poorly designed — all of it was building something. Not just skill. Judgment. The ability to look at a system and feel, before you can articulate it, that something is off.

AI has removed most of that struggle. And we need to talk honestly about what left with it.

What the struggle actually built

When we say “experience,” we treat it like a quantity — years logged, technologies touched, projects shipped. But experience isn’t a counter. It’s a collection of scars, and each one marks the boundary of something you didn’t understand until it cost you something.

Think about what those hard years actually deposited into us. The reflex to ask “what happens when this fails?” — not from a checklist, but from the gut, because you were the one sitting in the ruins. A feel for what “scalable” actually means, not the definition, but the visceral memory of watching something buckle at ten times the expected load. The ability to spot over-engineering because you wrote that clever abstraction once and maintained it eighteen months later. The patience to debug under pressure because you’ve been in enough crises to know that panic produces nothing useful.

None of that is in any documentation. It was built entirely through trial, failure, and the consequences of being wrong in ways that mattered.

The illusion of competence

Here’s the part that worries me. A junior engineer today can produce output that looks, on the surface, indistinguishable from the work of someone with five years of experience. The code is clean. The tests pass. The architecture holds together.

But correct and robust are not the same thing. Correct means it works under the conditions you thought of. Robust means it survives the ones you didn’t. That query that performs beautifully against a thousand rows and collapses when it meets ten million? That page cache that looks perfect until it starts leaking session data across users? You learn to ask about those things because you got burned by them, not because someone told you to check.
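To make the page cache example concrete, here is a minimal sketch of how that kind of leak can happen. Everything in it is hypothetical and made up for illustration: the `PageCache` class, the `vary_on_user` flag, and `render_account_page` do not come from any real framework or incident. The only difference between the leaky version and the safe one is whether the user is part of the cache key, which is exactly the kind of detail that passes every test written with a single test user.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, Tuple


@dataclass
class PageCache:
    """Toy in-memory page cache (hypothetical, for illustration only).

    When vary_on_user is False, pages are keyed by path alone, so the
    first user's rendered page is reused for everyone who follows.
    """
    vary_on_user: bool
    _store: Dict[Tuple[str, ...], str] = field(default_factory=dict)

    def get_or_render(self, path: str, user_id: str,
                      render: Callable[[str], str]) -> str:
        # The cache key decides who is allowed to share a cached page.
        key = (path, user_id) if self.vary_on_user else (path,)
        if key not in self._store:
            self._store[key] = render(user_id)
        return self._store[key]


def render_account_page(user_id: str) -> str:
    # Stand-in for a page that contains user-specific data.
    return f"Account page for {user_id}"


# "Correct": with one user, the naive cache behaves perfectly.
naive = PageCache(vary_on_user=False)
print(naive.get_or_render("/account", "alice", render_account_page))
# Not robust: the second user is served the first user's cached page.
print(naive.get_or_render("/account", "bob", render_account_page))

# Keying on the user as well keeps personal pages separate.
safe = PageCache(vary_on_user=True)
print(safe.get_or_render("/account", "alice", render_account_page))
print(safe.get_or_render("/account", "bob", render_account_page))
```

Run as written, the second line printed is Alice’s page, served to Bob. Nothing about the naive version looks wrong in review unless you already know to ask who shares the key.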

A junior engineer growing up with AI assistance will reach a senior title on a normal timeline. Their velocity will be high. Their output will look polished. What will be invisible is the layer underneath — the intuition that comes from struggling through problems when no tool could solve them for you.

That gap won’t show up in any performance metric. It’ll show up on a bad day, when they’re the most experienced person in the room and the system is doing something nobody expected and there’s no model to ask.

Now let me push back on myself

I want to be honest about something: not all of that old suffering was educational. Some of it was just waste.

Spending three days debugging a memory leak because the tooling was primitive didn’t always make me a better engineer. Sometimes it just made me tired. Fighting with a terrible build system didn’t teach me systems thinking — it taught me patience with bad tools, which is a different and less valuable thing. There’s a real risk of romanticising inefficiency just because it’s what we went through.

The honest version is this: some of the struggle was education, and some of it was just friction that happened to exist alongside education. AI is removing both, and the challenge is that we can’t easily separate which was which. The safest assumption is that the important lessons (the ones about failure modes, about systems under stress, about the gap between “works” and “works reliably”) still need to be learned through some form of difficulty. The rest? Maybe it’s fine that it’s gone.

The responsibility this puts on us

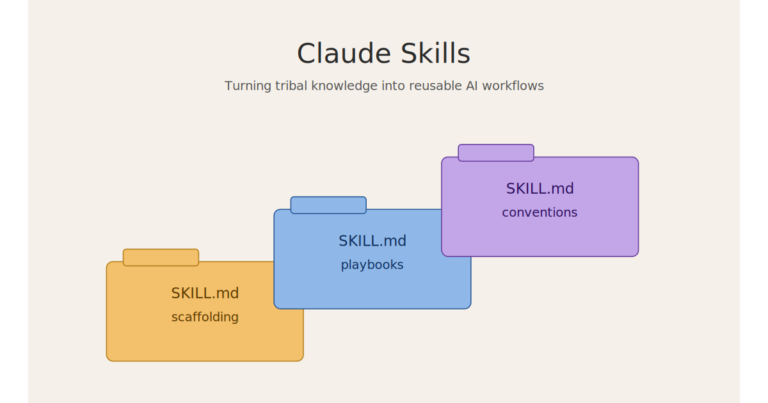

If the productive struggle was the teacher and the struggle has been largely removed, then we have to become the teacher. The knowledge that used to be earned through pain now has to be transferred through intention.

Practically, that means changing how we mentor and review. Stop reviewing code only for correctness, which the AI increasingly handles, and start reviewing for understanding. Ask the junior whether they know why it’s correct, and what happens when conditions change. Tell your failure stories, and be specific. The texture of a real incident (the wrong turns, the wasted hours, the embarrassing simplicity of the root cause) carries information that no training set contains.

Ask the questions experience taught you to ask automatically. What happens at ten times the load? What does the query plan actually look like, and what happens when the optimiser makes a different choice? What is in this response that’s user-specific, and what happens if it gets served to someone else? Who maintains this in two years? What if this dependency goes down? Those questions feel obvious to us only because we paid for them.

And when a junior’s solution has a subtle architectural problem, resist the urge to just fix it. Describe the conditions under which it breaks. Let them work out why. That recreates, in a low-stakes setting, the productive friction that AI removed from the high-stakes one.

This matters because the junior engineers entering the field now will become the senior engineers and architects of systems we can’t predict. They will encounter genuinely novel failures and will need to reason through them from first principles, under pressure, without a precedent to reach for. That capacity isn’t a product of having good tools. It’s a product of having been forced, at some point, to think without them.

The AI will write the code. It’s our job to make sure someone still understands it.